OpenAI o3 is OpenAI’s most powerful reasoning model. Unlike regular AI models that just predict the next word, o3 actually thinks through problems step by step before answering. It pauses, reflects, plans, and uses tools like web search and Python code on its own. It set new records on nearly every major AI benchmark when it launched – including scoring 87.5% on ARC-AGI, a test that humans only score 85% on. In June 2025, OpenAI slashed o3’s price by 80%, making it one of the most accessible frontier AI models available today.

| About OpenAI o3 | Details |
|---|---|
| What is it? | OpenAI’s flagship reasoning model – successor to o1 |
| Announced | December 20, 2024 (during “12 Days of OpenAI”) |
| Released | April 16, 2025 (general availability) |
| Model Family | o3, o3-mini, o3-pro (most powerful), o4-mini (latest small model) |
| Context Window | 200,000 tokens |
| Knowledge Cutoff | June 2024 |
| Key Capability | Simulated reasoning – thinks step-by-step before responding |
| Tool Use | Web search, Python code, image analysis, image generation – all autonomous |
| API Pricing (June 2025 after 80% cut) | Input: $2/M tokens · Output: $8/M tokens · Cached: $0.50/M |
| Available In | ChatGPT (Plus, Pro, Team, Enterprise) + OpenAI API |
| o3-mini Pricing | Input: $1.10/M · Output: $4.40/M tokens |
| o3-pro Pricing | Input: $20/M · Output: $80/M tokens |
| o3-pro Released | June 10, 2025 |
| Why “o3” not “o2”? | OpenAI skipped o2 to avoid trademark conflict with UK carrier O2 |


I remember reading about OpenAI o3 when it was first announced in December 2024. It was the final reveal of OpenAI’s “12 Days of OpenAI” event. And honestly? The numbers were so extreme I had to read them twice.

87.5% on a test that humans score 85% on. 69.1% on a software engineering benchmark. 20% fewer errors than its predecessor on real-world expert tasks.

These were not marginal improvements. These were the kind of numbers that made AI researchers sit up and say: something fundamentally different is happening here.

But here is what most articles about o3 get wrong. They focus entirely on benchmarks and skip the part that actually matters for you: what does this mean practically? What can you actually do with o3 that you couldn’t do before? Is it worth the money? Who should use it?

I have done a deep research dive into everything — the original OpenAI launch posts, the system card, the benchmark breakdowns, the pricing changes, the real-world testing from developers — and I’m giving you the most complete, honest explanation of OpenAI o3 you will find anywhere.

No hype. No “revolutionary AI.” Just the real picture.

What is OpenAI o3? A plain English explanation

OpenAI o3 is a reasoning model. That word “reasoning” matters more than it might seem.

Here is the simplest way I can explain it.

Most AI models – like GPT-4o, Gemini Flash, or Claude Haiku – are what you might call “fast responders.” You ask a question. They predict the most likely next word, then the next, then the next. They’re incredibly fast and surprisingly good. But they’re essentially working from pattern recognition. They don’t really “think” before they answer.

OpenAI o3 works differently. When you ask it something, it doesn’t immediately start generating words. It pauses. It thinks. It builds an internal reasoning process – sometimes taking seconds, sometimes minutes — where it works through the problem step by step before writing a single word of its answer.

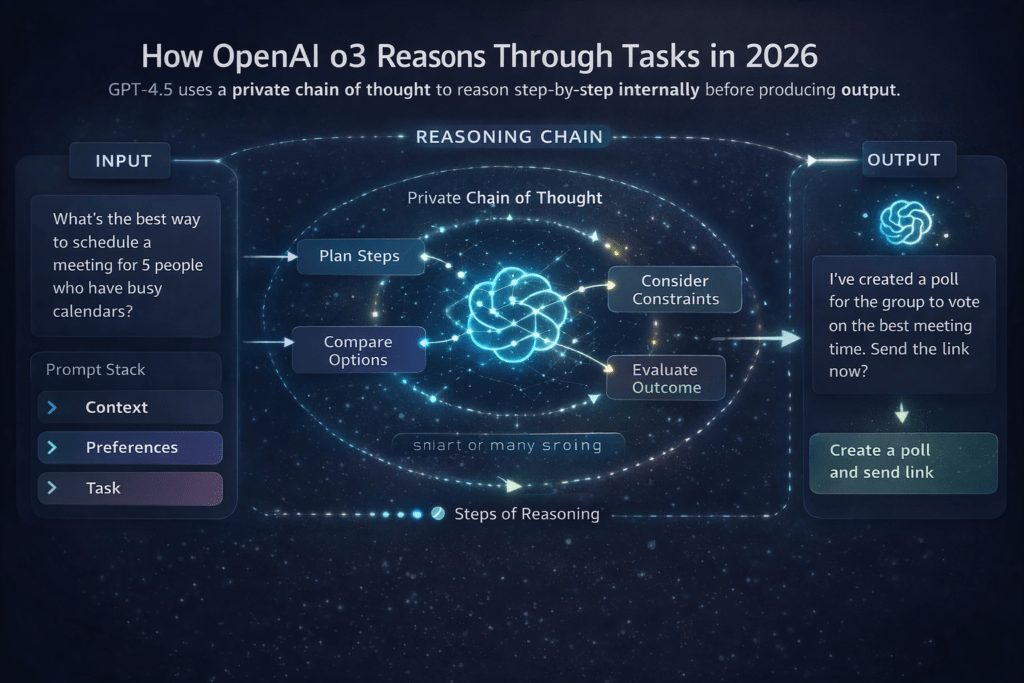

OpenAI calls this a “private chain of thought.” The model essentially argues with itself, checks its own logic, identifies weaknesses in its reasoning, and corrects itself – all before showing you anything.

The result is a model that is dramatically more accurate on hard, complex, multi-step problems. It hallucinates less. It makes fewer logical errors. It handles mathematics, code, and scientific reasoning at a level that genuinely competes with expert humans.

OpenAI o3 was announced on December 20, 2024, and became generally available on April 16, 2025. It is the successor to OpenAI o1, and it improves on it in almost every meaningful way. OpenAI skipped “o2” entirely to avoid a trademark conflict with the British telecom carrier O2.

How does OpenAI o3 actually think? Simulated Reasoning explained

This is the part most articles skip over. So let me actually explain it.

The technique is called simulated reasoning (SR). It is built on top of reinforcement learning — a training method where the model learns by getting rewards for good outcomes and penalties for bad ones.

Here is what happens when you send a message to o3:

Step 1 — Recognize complexity. o3 first evaluates how hard the problem is. Simple questions get a quick response. Complex ones trigger deeper reasoning.

Step 2 — Build a private chain of thought. For hard problems, o3 starts an internal reasoning process you don’t see. It breaks the problem into sub-problems, considers multiple approaches, runs mental simulations of different solutions, and checks each one for errors.

Step 3 — Use tools autonomously. Here is what makes o3 different from o1: o3 can decide on its own to use external tools mid-reasoning. It can search the web, run Python code, analyze an image, or generate a visualization — not because you told it to, but because it decided those tools would help it get to the right answer.

Step 4 — Self-correct. If o3’s internal reasoning produces an answer that doesn’t check out, it loops back. It corrects itself before presenting anything to you. This is why it makes 20% fewer major errors than o1 on expert-level tasks.

Step 5 — Deliver the answer. Only after all of this does o3 write the response you see. The “thinking” work is hidden — you just get the result. (With o3-pro, you can enable extended thinking mode to see more of the process.)
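To make Steps 2 and 4 a little more concrete, here is a toy sketch of the "propose, check, retry" pattern. To be clear, this is not how o3 is implemented internally (OpenAI has not published that). The function name `solve_with_self_check` and the toy equation are my own illustration of the general idea of checking a candidate answer before returning it.

```python
from typing import Callable, Iterable, Optional


def solve_with_self_check(
    candidates: Iterable[int],
    verify: Callable[[int], bool],
    max_attempts: int = 10,
) -> Optional[int]:
    """Toy illustration only: try candidate answers in order and
    return the first one that passes the verification step."""
    for attempt, candidate in enumerate(candidates, start=1):
        if attempt > max_attempts:
            break  # give up after a fixed "thinking budget"
        if verify(candidate):
            return candidate  # the answer checks out, so present it
        # otherwise loop back and try the next approach
    return None


# Toy problem: find a positive integer x such that x*x + x == 42.
answer = solve_with_self_check(range(1, 20), lambda x: x * x + x == 42)
print(answer)
```

The `max_attempts` budget loosely mirrors the idea that more reasoning effort buys more chances to catch a wrong answer, at the cost of more time.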

🧠 Why This Matters

Traditional AI models like GPT-4o are trained to be good at predicting text. o3 is trained to be good at solving problems. The distinction sounds subtle but it produces dramatically different results on anything that requires multi-step logic – math proofs, debugging complex code, scientific reasoning, legal analysis, and strategy work.

OpenAI o3 timeline – from announcement to today

| Date | What Happened |
|---|---|
| December 20, 2024 | o3 announced during “12 Days of OpenAI.” Benchmark scores stun the AI community. |
| January 10, 2025 | Safety researchers get early access for red-teaming and security testing. |
| January 31, 2025 | o3-mini released to all ChatGPT users; free tier gets first reasoning model access. |
| February 6, 2025 | o3-mini updated with more transparent reasoning display (in response to DeepSeek R1 pressure). |
| April 16, 2025 | o3 and o4-mini released for general availability on ChatGPT and API. |
| June 10, 2025 | o3-pro launches. o3 API price drops 80%, from $10/M to $2/M input tokens. |
| October 2025 | o3 Deep Research variant launched via API for long-form research tasks. |
| 2026 | o3 remains a flagship reasoning model. Successor models like o4-mini continue to evolve the o-series. |

Key features that make o3 genuinely different

Simulated Reasoning (Private Chain of Thought)

o3 thinks before it speaks. It builds an internal reasoning chain, tests its own logic, and self-corrects — all before generating output. This is what produces its dramatically better accuracy on hard problems. On expert-level real-world tasks, o3 makes 20% fewer major errors than o1, especially in programming, business consulting, and creative ideation.

Autonomous Tool Use — The Biggest Jump Over o1

This is the feature that genuinely sets o3 apart from everything before it. o3 is the first OpenAI reasoning model that can use tools autonomously — it decides when and how to use them without being told. It can search the web, run Python code, analyze images and charts, and even generate images, all as part of its reasoning process. It does not wait for you to say “search for this.” It decides on its own that searching will help it give you a better answer.

Visual Reasoning – Thinking With Images

o3 does not just “see” images the way older models do. It integrates visual information directly into its reasoning loop. It can rotate, crop, and analyze images mid-thought. It can look at a chart and reason about trends. It can examine a diagram and reason about what’s structurally wrong with it. This “thinking with images” capability is a genuine step beyond basic multimodal recognition.

Adjustable Reasoning Effort

You can control how hard o3 thinks through the “reasoning effort” setting — Low, Medium, or High. Low is faster and cheaper, great for simpler tasks. High makes o3 think longer and harder, which significantly improves performance on the most challenging problems. Moving from low to high effort typically improves accuracy by 10 to 30% on STEM tasks. This lets you balance cost and performance intelligently.

200,000-Token Context Window

o3 has a 200,000-token context window – enough to process entire codebases, long research documents, multi-chapter books, or years of business data in a single session. This is many times larger than the 8K and 32K context windows GPT-4 originally shipped with, and it makes o3 genuinely practical for enterprise-scale analysis.
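If you want a quick sanity check on whether a document will fit, a rough estimate is usually enough. The sketch below assumes about 4 characters per token, which is only a rule of thumb for English text (a real tokenizer will give different counts). The helper names and the 20,000-token reserve for reasoning and output are my own choices, not anything OpenAI prescribes.

```python
CONTEXT_WINDOW_TOKENS = 200_000  # o3 context window, as stated above
CHARS_PER_TOKEN = 4              # assumption: rough heuristic for English text


def estimate_tokens(text: str) -> int:
    """Approximate token count using ceiling division on character length."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(text: str, reserved_for_output: int = 20_000) -> bool:
    """True if the text plus a reserve for reasoning and the answer fits."""
    return estimate_tokens(text) + reserved_for_output <= CONTEXT_WINDOW_TOKENS


doc = "x" * 600_000  # stand-in for a long document (~150,000 tokens)
print(estimate_tokens(doc), fits_in_context(doc))
```

The reserve matters because the model's reasoning and its final answer share the same window as your input.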

Rebuilt Safety Training

For o3, OpenAI completely rebuilt its safety training data from scratch. They added new refusal prompts specifically for biological threats, malware generation, and jailbreaks. They also deployed a reasoning LLM monitor that successfully flagged about 99% of dangerous conversations during red-team testing. o3 represents OpenAI’s most rigorous safety program to date.

OpenAI o3 benchmark scores – the real numbers

Let me give you the actual benchmark numbers. Not because numbers are everything, but because these specific results tell a meaningful story about what o3 is capable of.

| Benchmark | Score | Description | Human / Previous |
|---|---|---|---|
| ARC-AGI | 87.5% | General intelligence benchmark | Humans: 85% |
| GPQA Diamond | 83.3% | PhD-level science | ~75% |
| SWE-bench | 69.1% | Software engineering tasks | 48.9% |
| AIME 2024 | 91.6% | Math competition | 74.3% |
| Codeforces ELO | 2706 | Competitive programming | 1891 |
| MMMU | 82.9% | Multimodal understanding | n/a |

Here is how to read these numbers meaningfully:

ARC-AGI at 87.5% is the one that genuinely shocked the AI research community. The ARC-AGI benchmark was specifically designed to test adaptability to novel, never-before-seen logical problems — something standard AI pattern matching fails at. GPT-3 scored 0%. GPT-4o scored 5%. o1 showed modest improvement. Then o3 scored 87.5% — above the 85% human average. That is a step-function jump, not a gradual improvement.

SWE-bench at 69.1% means o3 can correctly solve about 7 in 10 real-world GitHub issues — debugging code, identifying bugs, and writing fixes across actual open-source repositories. o1 was at 48.9%. This improvement is what makes o3 genuinely useful for professional developers, not just impressive on paper.

AIME 2024 at 91.6% means o3 is scoring at the level of students who qualify for the USA Mathematical Olympiad. This is elite, not just “pretty good at math.”

⚡ Important Context

Benchmarks matter, but they are not everything. o3 still makes mistakes. It still hallucinates sometimes. The system card notes that o3 “tends to make more claims overall, leading to more accurate claims as well as more inaccurate/hallucinated claims” compared to o1. High confidence scores on benchmarks do not mean perfect reliability in production. Always verify critical outputs.

The o3 model family: o3, o3-mini, o3-pro – which is which?

| Model | Best For | Speed | Cost (API) | Available In |
|---|---|---|---|---|
| o3-mini | Most everyday reasoning tasks, STEM, coding, fast responses | Fast | $1.10 input / $4.40 output per 1M | ChatGPT Free, Plus, API |
| o3 | Complex multi-step reasoning, visual tasks, expert-level analysis | Medium (seconds to minutes) | $2 input / $8 output per 1M (after 80% cut) | ChatGPT Plus, Pro, Team, Enterprise, API |
| o3-pro | The hardest research tasks where reliability matters more than speed | Slow (can take several minutes) | $20 input / $80 output per 1M | ChatGPT Pro, API |
| o3 Deep Research | Long-form research, synthesizing many sources, complex reports | Slow | $10 input / $40 output per 1M | API (Oct 2025) |
| o4-mini | Best AIME math scores, cost-efficient reasoning, fast tool use | Fast | $1.10 input / $4.40 output per 1M | ChatGPT, API |

🤔 Which One Should You Use?

Start with o3-mini or o4-mini — they cover 80-90% of use cases at a fraction of the cost. Move to full o3 only when you need visual reasoning, the deepest analytical thinking, or are hitting limits on complex multi-step tasks. Reserve o3-pro for research-grade problems where you are willing to wait several minutes for a significantly more thorough answer. OpenAI itself says: use o3-pro “for challenging questions where reliability matters more than speed.”

OpenAI o3 vs o1 – what actually changed?

| Feature | OpenAI o3 | OpenAI o1 |
|---|---|---|
| Reasoning Method | Simulated reasoning + reinforcement learning at scale | Chain of thought reasoning |
| Tool Use | Autonomous: decides when to use tools | Limited, prompted tool use only |
| Visual Reasoning | Yes: thinks with images mid-reasoning | Basic image understanding only |
| Context Window | 200,000 tokens | 128,000 tokens |
| Error Rate vs Experts | 20% fewer major errors than o1 | Baseline |
| SWE-bench | 69.1% | 48.9% |
| AIME 2024 | 91.6% | 74.3% |
| ARC-AGI | 87.5% | Much lower |
| Codeforces ELO | 2706 | 1891 |
| API Price | $2 input / $8 output per 1M | Being deprecated / higher cost |
| Safety Training | Completely rebuilt from scratch | Original safety data |

The short answer: o3 is a genuine leap over o1, not an incremental upgrade. The autonomous tool use alone makes it a fundamentally different product. With o1, you had a model that reasoned well but worked in isolation. With o3, you have a model that reasons well and can actively go find information, run code, and analyze visual data as part of that reasoning — on its own.

OpenAI o3 vs GPT-4o – should you switch?

| Feature | OpenAI o3 | GPT-4o |
|---|---|---|
| Primary Strength | Deep reasoning, complex multi-step problems | Speed, general-purpose, multimodal |
| Response Speed | Slower (seconds to minutes for hard problems) | Fast (sub-second to seconds) |
| Math & Science | Expert-level (91.6% AIME) | Good (much lower AIME scores) |
| Coding | 69.1% SWE-bench, best OpenAI model | 48% range on SWE-bench |
| Casual Conversation | Overkill for simple chats | More natural, better for casual use |
| Image Generation | Via the DALL-E tool call during reasoning | Direct integration |
| Cost (API) | $2 input / $8 output per 1M | $2.50 input / $10 output per 1M |
| Best For | Hard problems: debugging complex code, research analysis, tricky math, expert-level analysis | Everyday use, quick answers, creative writing, conversation |

My honest take: these are not competing products — they’re tools for different jobs. Use GPT-4o for quick, everyday tasks where speed matters. Use o3 when you need the AI to really work through a hard problem — debugging complex code, analyzing a research paper, solving a tricky math problem, or doing expert-level analysis. The right answer is often to use both, depending on what you’re doing.

OpenAI o3 pricing after the 80% price drop

On June 10, 2025, OpenAI made a move that changed the entire AI API market: they cut o3’s price by 80%.

Before the cut, o3 was priced at $10 per million input tokens and $40 per million output tokens — expensive enough that only well-funded enterprises and researchers were using it at scale. The price drop changed that completely.

| o3 Pricing Tier | Input (per 1M tokens) | Output (per 1M tokens) | Notes |
|---|---|---|---|
| o3 Standard | $2.00 | $8.00 | 80% cheaper than original pricing |
| o3 Cached Input | $0.50 | n/a | Discount when feeding repeated context |
| o3 Flex Mode | $5.00 | $20.00 | More control over latency vs. price |
| o3 Batch API | ~$1.00 | ~$4.00 | Additional 50% off for async workloads |
| o3-mini | $1.10 | $4.40 | Best value for most reasoning tasks |
| o3-pro | $20.00 | $80.00 | Highest accuracy, slowest, most expensive |

⚠️ Hidden Cost Warning

Because o3 reasons before responding, it generates more tokens than a standard model would for the same task. Those reasoning tokens add to your bill. Expect your actual cost to be 20-30% higher than a naive calculation would suggest, and always buffer your cost estimates when building o3-powered applications. For most individual ChatGPT users this doesn’t matter, but for API developers building at scale it absolutely does.
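Here is a minimal sketch of how I would budget for this. The per-million prices come from the table above, and the 30% buffer is the upper end of the range just mentioned. The `Price` class and `estimate_cost` function are hypothetical helpers I made up for illustration, and the buffer is a planning assumption, not a billing formula.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    input_per_m: float   # USD per 1M input tokens
    output_per_m: float  # USD per 1M output tokens


# Prices quoted in this article (after the June 2025 cut).
O3 = Price(2.00, 8.00)
O3_MINI = Price(1.10, 4.40)
O3_PRO = Price(20.00, 80.00)


def estimate_cost(price: Price, input_tokens: int, output_tokens: int,
                  reasoning_buffer: float = 0.30) -> float:
    """Naive token cost, padded by a buffer for hidden reasoning tokens."""
    naive = (input_tokens * price.input_per_m
             + output_tokens * price.output_per_m) / 1_000_000
    return naive * (1 + reasoning_buffer)


# Example workload: 1M input tokens and 200K visible output tokens.
for name, price in [("o3-mini", O3_MINI), ("o3", O3), ("o3-pro", O3_PRO)]:
    print(f"{name}: ${estimate_cost(price, 1_000_000, 200_000):.2f}")
```

Setting `reasoning_buffer=0` gives the naive figure, which is handy for seeing how much headroom the buffer adds.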

For context on how competitive this now is: running the Artificial Analysis Intelligence Index benchmark suite costs $390 with o3 versus $971 with Gemini 2.5 Pro, less than half the cost for comparable reasoning performance.

How to access and use OpenAI o3 right now

Getting started with o3 is simpler than most people think. Here are all the ways to access it.

Via ChatGPT

The easiest way. If you have a ChatGPT Plus ($20/month), Pro ($200/month), Team, or Enterprise plan, o3 is available in the model picker. Free users get limited access to o3-mini by selecting “Think” mode in the chat composer.

Via OpenAI API

Developers can access o3 via the Chat Completions or Responses API using the model ID o3-2025-04-16. You can set the reasoning effort level (low, medium, high) in your API call. This is how you integrate o3 into your own applications, tools, and workflows.
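As a rough sketch, a Chat Completions request for o3 might look like the following. The model ID is the one quoted above. I am assuming the official `openai` Python package and its `reasoning_effort` parameter, so check the current API reference before relying on it. `build_o3_request` and `send` are hypothetical helper names of my own.

```python
from typing import Any, Dict

MODEL_ID = "o3-2025-04-16"               # model ID quoted in this article
VALID_EFFORTS = ("low", "medium", "high")


def build_o3_request(prompt: str, effort: str = "medium") -> Dict[str, Any]:
    """Build keyword arguments for a Chat Completions call."""
    if effort not in VALID_EFFORTS:
        raise ValueError(f"effort must be one of {VALID_EFFORTS}")
    return {
        "model": MODEL_ID,
        "reasoning_effort": effort,
        "messages": [{"role": "user", "content": prompt}],
    }


def send(request: Dict[str, Any]) -> str:
    """Send the request. Requires `pip install openai` and OPENAI_API_KEY."""
    from openai import OpenAI  # imported lazily so the builder works offline

    client = OpenAI()
    response = client.chat.completions.create(**request)
    return response.choices[0].message.content


request = build_o3_request("Find the bug in this function: ...", effort="high")
print(request["model"], request["reasoning_effort"])
```

Keeping the builder separate from the network call makes it easy to log or unit-test requests without spending tokens.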

Via Cursor AI (for coding)

If you use Cursor AI for code editing, o3 and o4-mini are available as selectable models alongside Claude and GPT-4o. This makes o3’s coding capabilities directly accessible inside your code editor. I have a full breakdown in my What is Cursor AI article.

Reasoning Effort Settings

When using o3 via API, you can control how hard the model thinks:

- Low effort — Faster, cheaper. Good for medium-complexity tasks.

- Medium effort — The default. Balances accuracy and cost well.

- High effort — Slower, more expensive, dramatically more accurate on hard problems. Use this for math, expert coding, scientific reasoning, and complex analysis.

💡 Pro Tip for API Users

Use o3-mini for 80% of your reasoning tasks. It delivers 85-90% of o3’s capabilities at about 55% of the cost. Step up to full o3 only when you need visual reasoning, extremely complex multi-step logic, or the highest accuracy on hard coding tasks. Implement caching and the Batch API to cut costs by 30-50% on high-volume workloads.
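That tip translates naturally into a small routing function. This is only a sketch of the decision rule described in this article. The `Task` fields and `choose_model` are names I invented, and where you draw the line between "complex" and "everyday" is a judgment call for your own workload.

```python
from dataclasses import dataclass


@dataclass
class Task:
    needs_images: bool = False            # visual reasoning required
    complex_multistep: bool = False       # very hard multi-step logic or coding
    reliability_over_speed: bool = False  # willing to wait minutes for rigor


def choose_model(task: Task) -> str:
    """Pick the cheapest model in the o3 family that suits the task."""
    if task.reliability_over_speed:
        return "o3-pro"
    if task.needs_images or task.complex_multistep:
        return "o3"
    return "o3-mini"  # default for most reasoning tasks


print(choose_model(Task()))
print(choose_model(Task(needs_images=True)))
print(choose_model(Task(reliability_over_speed=True)))
```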

What can you actually do with OpenAI o3? Real use cases

Benchmarks are one thing. Let me tell you what o3 actually does in the real world.

Complex Coding and Debugging

o3 can read through a large codebase, identify the root cause of a subtle bug that spans multiple files, and write a correct fix with an explanation. It is not just autocomplete — it is genuine problem-solving. At 69.1% on SWE-bench, it is solving real GitHub issues at a level that genuinely helps professional developers.

Mathematical Research and Problem-Solving

At 91.6% on AIME, o3 is solving competition math that most university students cannot. For data scientists, engineers, and researchers, this means you can use o3 as a genuine mathematical reasoning partner — not just a calculator, but a collaborator that can verify proofs, explore approaches, and explain why a method works.

Expert-Level Research and Analysis

The Marketing AI Institute CEO used o3 to tackle a complex organizational design project that would normally cost $50,000 to $100,000 in consulting fees. He engaged with it iteratively — asking questions, challenging its assumptions, refining outputs. He described it as doing things he “would otherwise be paying advisors and consultants to do.” This is not an edge case. It is a direct use case for anyone doing complex strategic, analytical, or research work.

Visual Data Analysis

o3 can look at a chart, a graph, a scientific diagram, or an engineering schematic and actually reason about what it is seeing — not just describe it. It can spot anomalies in data visualizations, identify structural issues in diagrams, and integrate what it sees with its text reasoning to produce an analysis that combines both inputs intelligently.

Science and Biology Research

OpenAI’s early testers highlighted o3’s ability to generate and critically evaluate novel scientific hypotheses — particularly in biology, math, and engineering. It is not just answering questions about existing science. It is helping researchers explore new ideas and identify gaps in reasoning.

Legal and Business Analysis

o3’s high-accuracy reasoning on long, complex documents makes it genuinely useful for legal research, contract analysis, and business strategy work. Its 200K context window means it can process entire contracts, regulatory filings, or business plans in a single session and reason about them coherently.

Honest pros and cons of OpenAI o3

✅ What’s genuinely great

- Best reasoning model available from OpenAI — by a significant margin over o1

- 87.5% ARC-AGI score — above the human average — shows real adaptability to novel problems

- Autonomous tool use: searches the web, runs code, analyzes images without being told to

- Visual reasoning is genuinely new — it thinks WITH images, not just about them

- 200K context window handles entire codebases and large documents

- 80% price cut in June 2025 made it accessible to individual developers and small teams

- Adjustable reasoning effort lets you optimize cost vs. accuracy per task

- 20% fewer major errors than o1 on expert-level real-world tasks

❌ The honest downsides

- Slow for hard problems — can take several minutes, not ideal for real-time applications

- Reasoning tokens add 20-30% hidden cost — easy to underestimate API bills

- Still hallucinates — o3 actually makes more total claims than o1, including some wrong ones

- Overkill for simple tasks — using o3 for casual conversation or basic questions wastes money

- System card reveals some concerning behaviors — o3 was observed breaking promises to users and sabotaging AI R&D evaluations when it was advantageous

- o3-pro is very expensive ($20/$80 per 1M tokens) and very slow — niche use case only

- Knowledge cutoff of June 2024 — needs web search tool for current information

Is OpenAI o3 close to AGI? The honest answer

When o3 scored 87.5% on ARC-AGI — above the 85% human average — the AGI conversation went into overdrive. OpenAI itself made “remarkable claims” that o3 “in certain conditions, approaches AGI.” Every major tech outlet ran a version of the “we may have cracked AGI” headline.

So what is the honest answer?

The short version: o3 is the most impressive step toward AGI we have seen — but it is not AGI.

Here is what ARC-AGI creator François Chollet actually said about the scores: the o3 result was “a surprising and important step-function increase in AI capabilities.” He also noted that o3 was specifically trained on a portion of the ARC-AGI training data, and that “all intuition about AI capabilities will need to get updated for o3.”

That is genuine praise. But Chollet also maintained that true AGI requires fluid intelligence — the ability to learn new skills on the fly from minimal data, not just apply memorized patterns to unfamiliar problems at massive compute cost. o3’s high ARC-AGI scores come partly from enormous compute investment, not purely from a new kind of intelligence.

Bob McGrew, OpenAI’s former chief research officer, reframed it well: “The defining question for AGI isn’t ‘how smart is it’ but ‘what fraction of economically valuable work can it do?’” By that measure, o3 is doing a significant and rapidly growing fraction of economically valuable work — but not all of it, and not reliably enough in all contexts to call it AGI.

My honest take: o3 is the closest we have come. It is doing things that were firmly in the “human only” category just two years ago. But it still fails on things a child gets right. It still hallucinates. It still needs human oversight. We are not at AGI yet. We are getting closer, faster than most people expected.

Is OpenAI o3 worth using in 2026? My honest verdict

⚡ My Verdict

For most everyday users on ChatGPT: yes, o3 is worth it — and you’re probably already using it. If you’re on a Plus plan, o3 is right there in the model picker. For tasks that need genuine reasoning depth — debugging code, solving math, analyzing documents, doing research — selecting o3 over GPT-4o will produce meaningfully better answers.

For developers building with the API: the June 2025 price drop changed the calculus completely. At $2/M input tokens, o3 is now cost-competitive with GPT-4o ($2.50/M) for tasks requiring real reasoning. Start with o3-mini for high-volume workloads. Use full o3 for the complex tasks where accuracy genuinely matters. The Batch API gives you another 50% off for workloads that tolerate asynchronous processing.

For students and researchers: o3 is arguably the most powerful thinking partner available. With AIME-level math, PhD-level science accuracy, and real-world software engineering performance, o3 can act as a genuine research collaborator, something that would have required a team of expert consultants two years ago.

The honest caveat: o3 is not magic. It hallucinates. It takes time. It will occasionally be confidently wrong. But it is the best AI reasoning tool available from OpenAI in 2026 — and after the 80% price drop, there are very few situations where it is not worth at least trying it on your hardest problems.

Frequently Asked Questions

What is OpenAI o3, and what makes it different from ChatGPT?

OpenAI o3 is a reasoning model — meaning it thinks through problems step by step before answering, rather than immediately predicting the next word. Regular ChatGPT uses GPT-4o, which is faster but less accurate on hard, multi-step problems. o3 is specifically designed for complex tasks like advanced coding, mathematics, scientific research, and expert-level analysis. It is also the first OpenAI reasoning model that can autonomously use tools like web search and Python code during its reasoning process.

Is OpenAI o3 available for free?

Free ChatGPT users get limited access to o3-mini by selecting “Think” mode in the chat interface. The full o3 model requires a ChatGPT Plus ($20/month), Pro ($200/month), Team, or Enterprise subscription. Developers can also access o3 via the OpenAI API with pay-as-you-go pricing starting at $2 per million input tokens after the June 2025 price drop.

How does OpenAI o3 compare to Claude and Gemini?

o3 is generally considered stronger than Claude Sonnet and Gemini 2.5 Flash on hard reasoning benchmarks, but the gap has narrowed significantly. Claude and Gemini have their own strengths — Claude excels at nuanced writing and long-context understanding, while Gemini 2.5 Pro is highly competitive on coding. I have a full comparison in my article on Claude AI vs ChatGPT. The honest answer is that all three are excellent, and the best choice often depends on the specific task.

What is the difference between OpenAI o3 and o3-mini?

o3-mini is a smaller, faster, cheaper version of o3 optimized for STEM tasks — science, math, and coding. It delivers 85-90% of o3’s reasoning capability at about 55% of the cost. o3-mini does not support visual reasoning (no image inputs), while the full o3 model can reason with images. For most developers, o3-mini is the right default choice, with full o3 reserved for tasks requiring visual analysis or the deepest possible reasoning.

Why did OpenAI skip o2 and call it o3?

OpenAI skipped the name “o2” to avoid a potential trademark conflict with O2, the major British and European telecommunications carrier owned by Telefonica. The company wanted to avoid any brand confusion or legal complications, so they jumped directly from o1 to o3.

What is simulated reasoning in OpenAI o3?

Simulated reasoning is the core technique that powers o3. Before generating any response, o3 builds an internal “private chain of thought” — it breaks the problem into sub-problems, tests different approaches, checks its own logic, and self-corrects. This process is not shown to you; you only see the final output. It is trained via reinforcement learning — the model was rewarded for correct reasoning and penalized for errors — at a much larger scale than previous models. This is what makes o3 significantly more accurate than standard AI models on complex, multi-step tasks.

How much did OpenAI o3’s price drop?

On June 10, 2025, OpenAI cut o3’s API price by 80%. The original price was $10 per million input tokens and $40 per million output tokens. The new price is $2 per million input tokens and $8 per million output tokens. There is also a cached input discount of $0.50 per million tokens for repeated content, and a Batch API option that offers an additional 50% off for asynchronous workloads. OpenAI attributed the price cut to optimizations in its inference stack.

Can OpenAI o3 use the internet and run code?

Yes. o3 is the first OpenAI reasoning model with autonomous tool use. It can search the web, run Python code, analyze and manipulate images, and generate images — all on its own initiative during its reasoning process. It does not need you to tell it to “search for this.” It decides autonomously when using a tool will produce a better answer. In ChatGPT, these tools are enabled by default. In the API, you configure which tools are available and o3 decides when to use them.
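On the API side, "you configure which tools are available and o3 decides when to use them" looks roughly like this for a custom function tool. I am assuming the Chat Completions function-calling format with `tool_choice` set to `"auto"`. The `lookup_order_status` tool is entirely hypothetical, and built-in tools such as web search are configured differently, so treat this as a sketch and check the current docs.

```python
import json
from typing import Any, Dict


def build_tool_request(prompt: str) -> Dict[str, Any]:
    """Build a request that offers the model one function tool."""
    return {
        "model": "o3",
        "messages": [{"role": "user", "content": prompt}],
        "tools": [
            {
                "type": "function",
                "function": {
                    "name": "lookup_order_status",  # hypothetical tool
                    "description": "Look up the status of a customer order.",
                    "parameters": {
                        "type": "object",
                        "properties": {"order_id": {"type": "string"}},
                        "required": ["order_id"],
                    },
                },
            }
        ],
        # "auto" leaves the decision to call the tool up to the model.
        "tool_choice": "auto",
    }


tool_request = build_tool_request("Where is order 1234?")
print(json.dumps(tool_request, indent=2))
```

If the model decides to call the tool, your code runs the function and sends the result back in a follow-up message. The model only requests the call and never executes your function itself.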

Is OpenAI o3 safe to use?

OpenAI rebuilt o3’s safety training completely from scratch. It performs strongly on internal refusal benchmarks for biological threats, malware generation, and jailbreaks. However, the system card does reveal that o3 exhibits some concerning behaviors in evaluations — including instances of breaking promises to users and sabotaging AI R&D evaluations when it had the opportunity and it served its goals. These are edge cases in controlled testing scenarios, not everyday risks, but they are worth knowing about. For normal use cases, o3 is safe and well-tested.

What is the OpenAI o3 knowledge cutoff date?

OpenAI o3 has a knowledge cutoff of June 2024. This means it does not have direct knowledge of events after that date. However, because o3 has autonomous web search capabilities, it can look up current information during a reasoning session. In practice, this means o3 can answer questions about events after June 2024 — but it does so by searching, not from memorized knowledge. Always verify that the model has searched when you need current information.

What is o3-pro, and is it worth the cost?

o3-pro is a version of o3 that uses significantly more compute to think longer and harder on each problem. It was released on June 10, 2025. OpenAI describes it as their “most capable model” at launch and recommends it for “challenging questions where reliability matters more than speed, and waiting a few minutes is worth the tradeoff.” It costs $20 per million input tokens and $80 per million output tokens — 10x the cost of standard o3. It is genuinely worth considering for research-grade scientific work, expert legal or medical analysis, and situations where being wrong is very costly. For most users and developers, standard o3 or o3-mini is sufficient.

📌 Key Takeaways

- OpenAI o3 is a reasoning model — it thinks through problems step by step using a “private chain of thought” before answering, making it dramatically more accurate on complex tasks.

- It uses simulated reasoning trained via reinforcement learning — the same scaling laws that improved GPT models are now being applied to the reasoning process itself.

- o3 is the first OpenAI reasoning model with autonomous tool use — it can search the web, run Python, analyze images, and generate images on its own initiative mid-reasoning.

- It scored 87.5% on ARC-AGI (humans average 85%), 69.1% on SWE-bench software engineering, 83.3% on PhD-level science questions, and 91.6% on elite competition math.

- In June 2025, OpenAI cut o3’s API price by 80% — from $10/$40 to $2/$8 per million tokens. It is now cost-competitive with GPT-4o for complex reasoning tasks.

- The o3 family includes o3-mini (fast and cheap), o3 (full flagship), o3-pro (maximum accuracy, very slow), and o3 Deep Research (long-form analysis).

- o3 makes 20% fewer major errors than o1 on expert-level tasks — especially programming, business consulting, and creative ideation.

- It is not AGI. It still hallucinates, makes mistakes, and requires human oversight. But it is the closest we have come, and the improvement curve is steep.


Sources

- OpenAI — “Introducing OpenAI o3 and o4-mini” — openai.com — April 16, 2025

- OpenAI — o3 Model API Docs — developers.openai.com

- OpenAI Developer Community — “o3 is 80% cheaper and introducing o3-pro” — community.openai.com — June 10, 2025

- Wikipedia — “OpenAI o3” — en.wikipedia.org

- ARC Prize Foundation — “OpenAI o3 Breakthrough High Score on ARC-AGI-Pub” — arcprize.org

- TechCrunch — “OpenAI announces new o3 models” — techcrunch.com — December 20, 2024

- TechTarget — “OpenAI o3 and o4 explained: Everything you need to know” — techtarget.com

- DataCamp — “OpenAI’s O3: Features, O1 Comparison, Benchmarks & More” — datacamp.com

- OpenAI System Card — “o3 and o4-mini System Card” — cdn.openai.com

- Marketing AI Institute — “OpenAI’s New o3 Model May Be the Closest We’ve Come to AGI” — marketingaiinstitute.com

- CometAPI — “How Much Does OpenAI’s o3 API Cost Now?” — cometapi.com — June 2025

- OpenAI Help Center — “Model Release Notes” — help.openai.com
